Episode 6 of 40

In the previous episode, we saw how our digital intern improves by learning from mistakes. But even with constant feedback, there is still a fundamental problem.

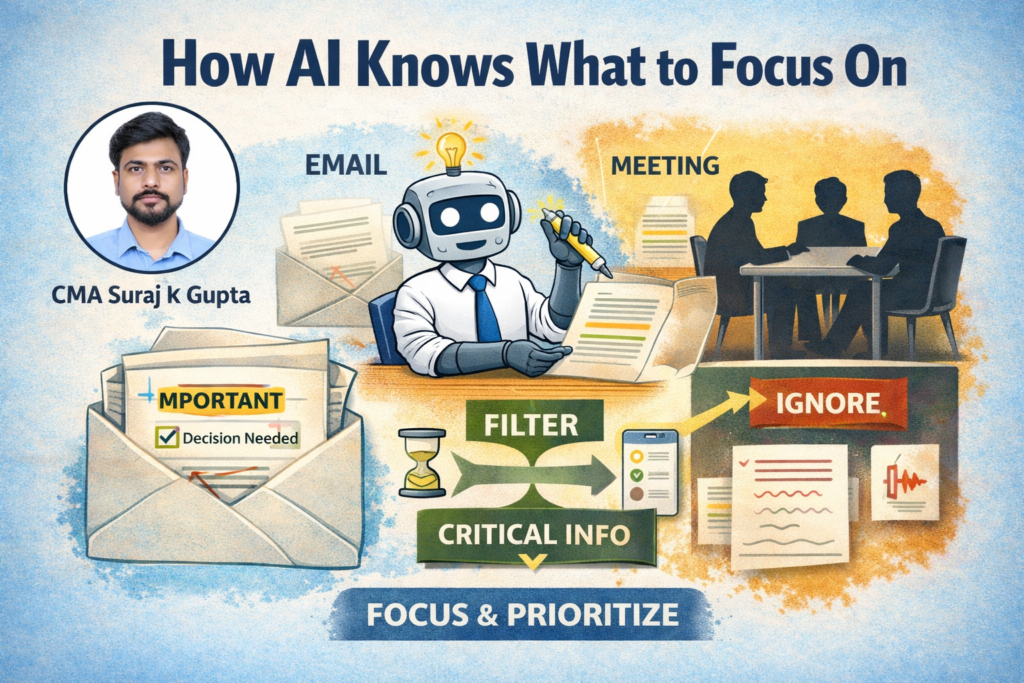

Not all information is equally important.

Imagine you hand your intern a long email.

Somewhere inside it is a single critical line — a deadline, a key decision, or an action item. The rest of the email is just context, background, or noise.

If the intern treats every sentence equally, they will likely miss what actually matters.

This is where a good teacher makes all the difference.

A teacher does not just give information. They guide attention.

They show the student what to focus on and what to ignore.

Now think about your own workday.

You sit through a 30-minute meeting. But in reality, only five minutes contain the decisions that truly matter. The rest is discussion, clarification, or repetition.

The real skill is not processing more information.

It is filtering the right information.

This is exactly what modern AI systems have learned to do.

Instead of treating every word equally, they assign different levels of importance to different parts of the input. Words that are more relevant to the task receive more attention, while less important ones are given lower priority.

This allows the system to understand context far more effectively.
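For readers who like to see the idea in numbers, here is a minimal sketch of that weighting step. It is a toy illustration, not how any production model is built: the sentences, the two-number "meaning vectors" in `keys`, and the `query` are all made-up assumptions, and real systems learn these numbers from data. The scoring used here is a scaled dot product followed by a softmax, which is the standard way attention turns relevance scores into weights that add up to 1.

```python
import math

def softmax(scores):
    """Turn raw relevance scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(query, keys):
    """Score each key against the query (scaled dot product), then normalize."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)

# Hypothetical email sentences and made-up vectors (assumptions for illustration).
sentences = [
    "Thanks for joining the call.",
    "The report is due Friday.",
    "Hope you had a nice weekend.",
]
keys = [[0.1, 0.2], [0.9, 0.8], [0.0, 0.1]]
query = [1.0, 1.0]  # stands in for the question "what is the deadline?"

weights = attention_weights(query, keys)
for sentence, weight in zip(sentences, weights):
    print(f"{weight:.2f}  {sentence}")
```

In this toy setup the deadline sentence receives the largest weight, while the small talk still gets some weight, just less. Attention lowers the priority of less relevant text rather than deleting it.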

In artificial intelligence, this technique is known as the Attention Mechanism, and the model design built around it is called the Transformer.

It is one of the most important innovations in modern AI.

It is the reason systems today can:

- summarize long documents

- answer complex questions

- generate context-aware responses

Instead of simply reading everything, the model learns to focus.

And just like in the workplace, knowing what to ignore is often more valuable than knowing more.

In the next episode, we will explore a practical question that every business eventually faces: what it actually costs to run this powerful digital intern.

Director’s Quick Brief

Key Concept

Transformers and the Attention Mechanism

Simple Definition

A method that allows AI to focus on the most important parts of information instead of treating everything equally.

Real-world Example

While reading a long email, you naturally focus on deadlines, key decisions, and action items, while ignoring less important details.

Playbook Progress

Season 1 – Raising the Intern

Episode 6 of 7