Episode 18 of 40

In the previous episode, we explored how the quality of instructions shapes AI output. But this raises an important question:

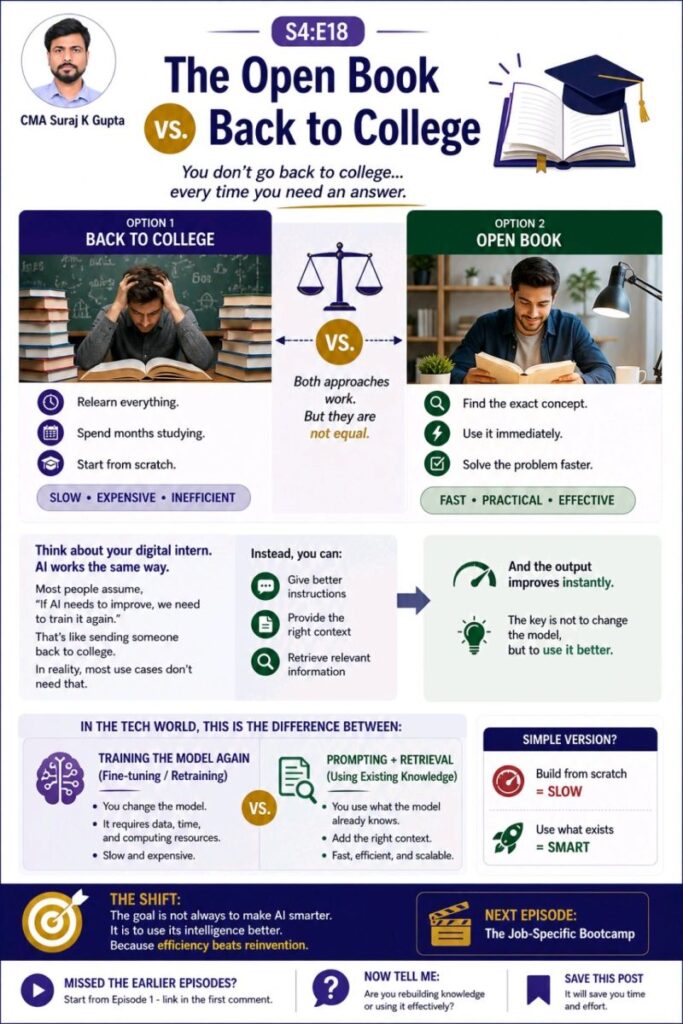

Should AI always be trained again to improve?

Consider a simple situation.

You are trying to solve a problem.

You have two choices.

The first option is to go back to college. You relearn everything, spend months studying, and rebuild your knowledge from the ground up.

The second option is to open a book. You locate the exact concept you need, apply it immediately, and solve the problem efficiently.

Both approaches can work. However, they are not equal.

One is time-consuming and resource-intensive.

The other is fast, practical, and effective.

AI operates in a similar way.

A common assumption is that if AI needs to perform better, it must be trained again. In reality, most use cases do not require retraining the model.

Instead, performance can often be improved by:

- providing clearer instructions

- adding relevant context

- retrieving the right information at the right time

This leads to a more efficient approach.

Rather than changing the model itself, the focus shifts to using its existing intelligence more effectively.
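
As a rough illustration of the three levers listed above (clearer instructions, added context, and retrieval), here is a minimal Python sketch that assembles a better prompt from a few reference notes without touching any model. Everything in it is an assumption made for illustration: the `NOTES` list, the `words`, `retrieve`, and `build_prompt` helpers, and the simple word-overlap ranking are hypothetical stand-ins, not any real product's API. Real systems typically use more capable search.

```python
import re

# Hypothetical reference notes standing in for "the book" (illustrative data only).
NOTES = [
    "Refunds are issued within 14 days of a return request.",
    "Standard shipping takes 3 to 5 business days.",
    "Support is available on weekdays from 9am to 5pm.",
]


def words(text: str) -> set[str]:
    """Lowercase a string and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, notes: list[str], k: int = 1) -> list[str]:
    """Return the k notes that share the most words with the question."""
    q = words(question)
    ranked = sorted(notes, key=lambda n: len(q & words(n)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, notes: list[str]) -> str:
    """Combine a clear instruction, retrieved context, and the question."""
    context = "\n".join(f"- {n}" for n in retrieve(question, notes))
    return (
        "Answer using only the context below. "
        "If the answer is not there, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    # The printed text is what would be sent to an unchanged model.
    print(build_prompt("How long does shipping take?", NOTES))
```

The model itself stays exactly as it was. Only the input improves, which corresponds to the "open a book" option in the analogy.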

In practice, this distinction can be summarized as:

- Retraining the model: slower, more expensive, more resource-heavy

- Using existing knowledge: faster, more practical, more scalable

The key insight is simple.

The objective is not always to make AI smarter.

Often, it is to use the intelligence it already has more effectively.

Director’s Quick Brief

Key Concept

Using existing knowledge vs retraining the model

Simple Definition

Improving AI performance by providing better instructions and context instead of retraining the model.

Real-world Analogy

Choosing between going back to college or simply opening a book to solve a problem.

Playbook Progress

Season 4 – Training & Managing the Daily Work

Episode 18 of 24